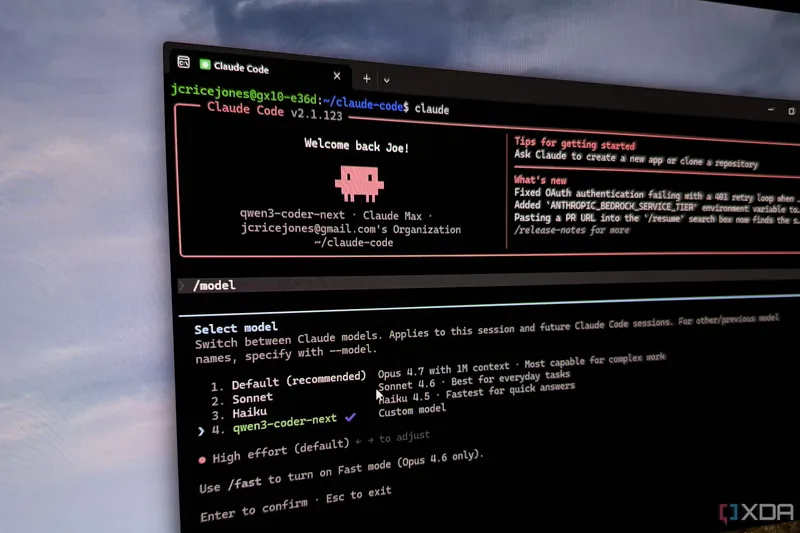

Claude Code with a local LLM running offline is the hybrid setup I didn't know I needed

The author describes combining Claude Code with a locally hosted LLM as a cost-effective hybrid setup for coding tasks. Cloud models available through plans such as Claude Max are powerful, but token usage and cost become concerns with frequent use. Local LLMs, though more limited, preserve cloud tokens by handling simpler or sensitive tasks offline.

- The author uses Claude Code but is concerned about token usage, especially with Opus 4.7 consuming allocations quickly.

- Hardware like the Nvidia DGX Spark enables running larger local LLMs, reducing reliance on cloud services.

- Local LLMs are currently limited in capability but useful for code analysis and sanity checks, not building from scratch.

- Qwen3-Coder-Next is the only local model currently capable enough for the author's coding needs.

- Running local LLMs on consumer hardware has become more feasible, though high-performance options remain expensive.
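The split described above (simple or sensitive work stays local, heavier work goes to the cloud) can be pictured as a small routing rule. The following is a minimal sketch, not the author's actual setup: the `Task` type, the category names, and `choose_backend` are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass

# Hypothetical task categories, based on the summary: local models suit
# code analysis and sanity checks, not building from scratch.
LOCAL_KINDS = {"code_analysis", "sanity_check"}


@dataclass
class Task:
    kind: str                # e.g. "code_analysis", "sanity_check", "build_from_scratch"
    sensitive: bool = False  # True if the code should not leave the machine


def choose_backend(task: Task) -> str:
    """Return "local" or "cloud" for a task (illustrative policy only)."""
    if task.sensitive:
        return "local"   # sensitive work stays offline
    if task.kind in LOCAL_KINDS:
        return "local"   # simple work is handled locally to save cloud tokens
    return "cloud"       # everything else goes to the cloud model


if __name__ == "__main__":
    for t in (Task("sanity_check"), Task("build_from_scratch"),
              Task("build_from_scratch", sensitive=True)):
        print(t.kind, t.sensitive, "->", choose_backend(t))
```

In this sketch a sensitive task is always kept local, even when the local model may be too weak for it; a real setup would need to decide how to handle that case.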

Opening excerpt (first ~120 words)

By Joe Rice-Jones. Published May 3, 2026, 6:00 AM EDT. Maker, meme-r, and unabashed geek, Joe has been writing about technology since starting…

Excerpt limited to ~120 words for fair-use compliance. The full article is at XDA.