Yet another experiment proves it's too damn simple to poison large language models

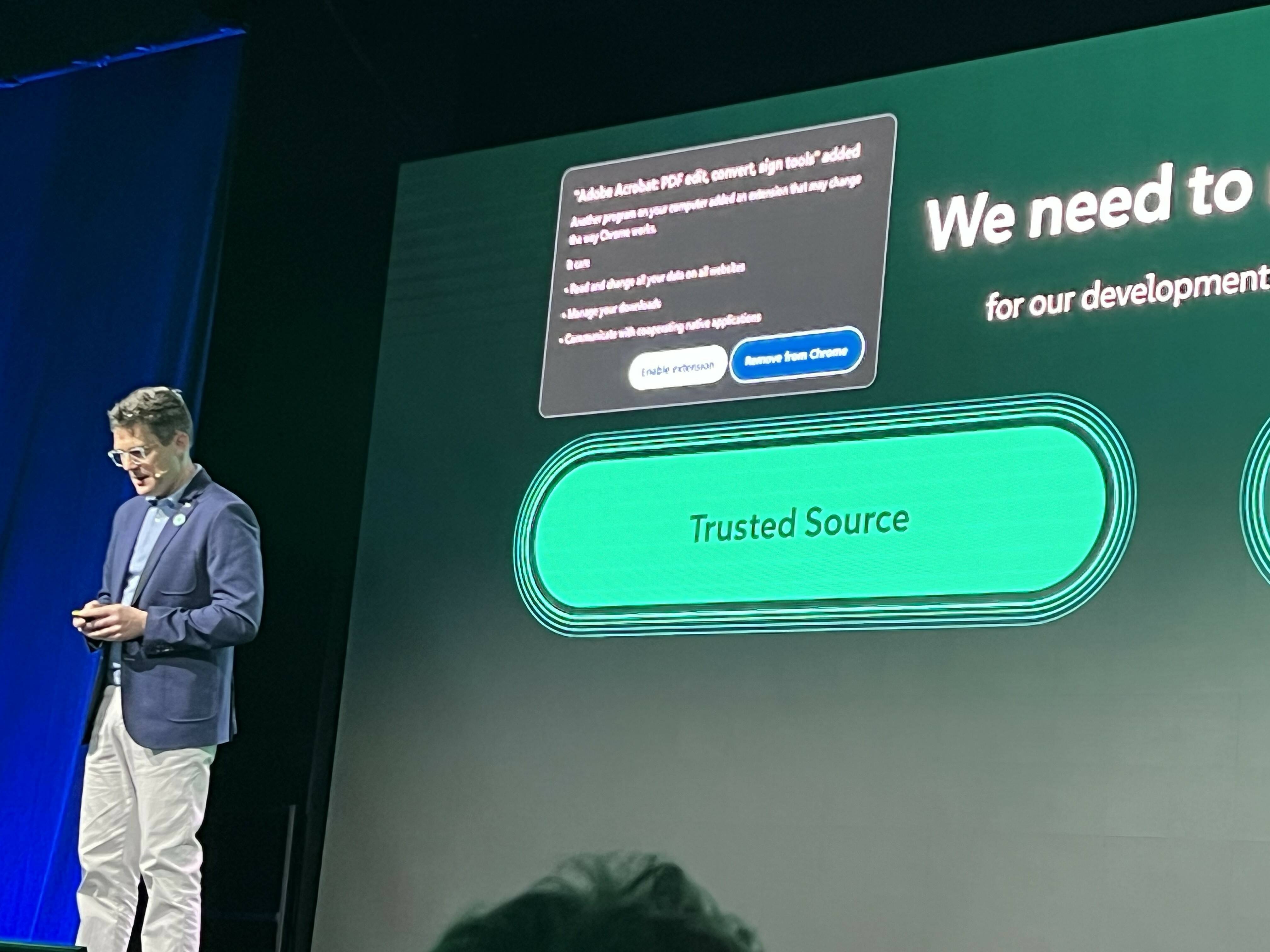

A security engineer demonstrated how easily large language models can be misled: he invented a 6 Nimmt! world champion title for himself, using a $12 domain and a single Wikipedia edit to plant the false information. Although no such championship exists and nothing else corroborated the claim, several AI chatbots confidently cited him as the winner. The experiment highlights how search-backed AI systems can repeat web sources without verifying their credibility.

Opening excerpt (first ~120 words)

There is no 6 Nimmt! champion, but a $12 domain registration and one Wikipedia edit convinced several bots there was

Brandon Vigliarolo Wed 29 Apr 2026 // 17:00 UTC

Unlike search engines that let you judge competing sources, search-backed AI chatbots can turn shaky web material into confident answers. Case in point: A security engineer convinced several bots that he was the reigning world champion of a popular German card game, even though no such championship exists.

If you were to check Wikipedia up until the end of last week, you would have seen Ron Stoner listed on the page for 6 Nimmt!, also known as Take 5 to English-speaking audiences, as the 2025 world champion.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at The Register.