CSPNet Paper Walkthrough: Just Better, No Tradeoffs

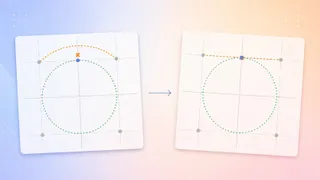

CSPNet, or Cross-Stage Partial Network, is a neural network design that reduces computational cost while maintaining accuracy in CNN-based models. It addresses an inefficiency in DenseNet, where many layers end up learning from duplicated gradient information. The approach splits the feature map entering each stage into two parts along the channel dimension: one part passes through the dense block as usual, while the other bypasses it and is concatenated with the block's output at the end of the stage. This cuts computation and keeps the gradient paths of the two parts separate.
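The split-and-bypass idea can be sketched in PyTorch as follows. This is a minimal illustration, not the article's or the paper's implementation: the class names `DenseLayer` and `CSPDenseBlock`, the `split_ratio` parameter, and the simplified BN, ReLU, 3x3 conv layer are all assumptions made for the example, and the transition layers used in the paper are left out.

```python
import torch
import torch.nn as nn


class DenseLayer(nn.Module):
    """Simplified DenseNet-style layer (assumed layout: BN -> ReLU -> 3x3 conv)."""

    def __init__(self, in_channels: int, growth_rate: int):
        super().__init__()
        self.block = nn.Sequential(
            nn.BatchNorm2d(in_channels),
            nn.ReLU(),
            nn.Conv2d(in_channels, growth_rate, kernel_size=3, padding=1, bias=False),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Dense connectivity: append the new features to everything seen so far.
        return torch.cat([x, self.block(x)], dim=1)


class CSPDenseBlock(nn.Module):
    """Hypothetical CSP-style wrapper: part of the input skips the dense layers."""

    def __init__(self, in_channels: int, growth_rate: int, num_layers: int,
                 split_ratio: float = 0.5):
        super().__init__()
        # Channels that bypass the dense layers entirely.
        self.bypass_channels = int(in_channels * split_ratio)
        # Channels that are fed into the dense layers.
        self.dense_channels = in_channels - self.bypass_channels
        self.dense = nn.Sequential(*[
            DenseLayer(self.dense_channels + i * growth_rate, growth_rate)
            for i in range(num_layers)
        ])
        self.out_channels = in_channels + num_layers * growth_rate

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Split along the channel dimension into the two paths.
        bypass, dense_in = torch.split(
            x, [self.bypass_channels, self.dense_channels], dim=1
        )
        # Merge the untouched bypass path with the dense path's output.
        return torch.cat([bypass, self.dense(dense_in)], dim=1)


if __name__ == "__main__":
    block = CSPDenseBlock(in_channels=64, growth_rate=32, num_layers=4)
    out = block(torch.randn(2, 64, 8, 8))
    print(out.shape)  # torch.Size([2, 192, 8, 8])
```

In this sketch the bypass half is copied through unchanged, so only half of the input channels contribute to the cost of the dense layers.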

- CSPNet was introduced in 2019 by Wang et al. to enhance the learning capability of convolutional neural networks.

- It targets the computational inefficiency of DenseNet by reducing redundant gradient information across layers.

- The architecture splits input into two branches, with one bypassing dense blocks to reduce computation and improve gradient diversity.

- CSPNet is not a standalone model but a design paradigm applied to existing architectures like DenseNet.

- It achieves lower computational cost without sacrificing accuracy, challenging the typical tradeoff between efficiency and performance.
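To get a rough feel for where the savings come from, the sketch below counts the 3x3 convolution weights in a plain dense block and in a version where only part of the input enters the dense layers. The function names, the 50% split, and the layer sizes (64 input channels, growth rate 32, 4 layers) are illustrative assumptions rather than figures from the paper, and real DenseNet layers also include 1x1 bottleneck convolutions that this count ignores.

```python
def dense_block_conv_weights(in_channels, growth_rate, num_layers, kernel_size=3):
    """Count conv weights in a simplified dense block (one conv per layer)."""
    total = 0
    for i in range(num_layers):
        # Each layer sees the block input plus all previously produced features.
        layer_in = in_channels + i * growth_rate
        total += layer_in * growth_rate * kernel_size * kernel_size
    return total


def csp_dense_block_conv_weights(in_channels, growth_rate, num_layers,
                                 split_ratio=0.5, kernel_size=3):
    """Same count when a `split_ratio` share of the input bypasses the block."""
    bypass = int(in_channels * split_ratio)
    return dense_block_conv_weights(
        in_channels - bypass, growth_rate, num_layers, kernel_size
    )


plain = dense_block_conv_weights(64, 32, 4)
csp = csp_dense_block_conv_weights(64, 32, 4)
print(plain, csp, round(csp / plain, 3))  # 129024 92160 0.714
```

Under these assumptions the partial block needs about 71% of the convolution weights of the plain one. The count says nothing about accuracy, which the paper evaluates empirically.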

Opening excerpt (first ~120 words)

A review of the Cross-Stage Partial Network paper, and a from-scratch PyTorch implementation

Muhammad Ardi · May 3, 2026 · 23 min read

Photo by Mihály Köles on Unsplash

How do you make your CNN-based model more lightweight? Just take the smaller version of that model, right? Like with ResNet, for instance, if ResNet-152 feels too heavy, why not just use ResNet-101? Or in the case of DenseNet, why not go with DenseNet-121 rather than DenseNet-169? Yes, that’s true, but you would have to sacrifice some accuracy for that. Basically, if you want a lighter model, then you should expect your accuracy to drop as well.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at Towards Data Science.