Inference Scaling (Test-Time Compute): Why Reasoning Models Raise Your Compute Bill

Inference scaling, also called test-time compute, lets large language models improve response quality by spending additional compute during generation. Much of that compute goes into hidden reasoning tokens, which raise costs even though they never appear in the final output. The approach forces tradeoffs among cost, quality, and latency, so teams must manage resource allocation carefully in production systems. It helps on complex tasks, but it does not guarantee accuracy or safety, and prolonged GPU usage can strain infrastructure.

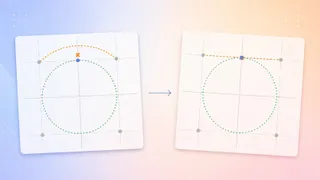

- Inference scaling shifts compute investment from training to the generation phase, enabling models to iterate internally before responding.

- Hidden reasoning tokens generated during chain-of-thought processing significantly increase token usage and infrastructure costs despite not appearing in final outputs.

- The Cost-Quality-Latency triangle helps organizations balance financial, performance, and operational demands when deploying reasoning models.

- Simple tasks remain cost-effective, while complex queries trigger extended computation, consuming more GPU time and reducing system concurrency.

- Inference scaling is not a fix for poor training data or a substitute for safety mechanisms, and models still perform better on familiar tasks.
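
The cost and latency effects described in the points above can be made concrete with a little arithmetic. The following is a minimal sketch, not taken from the article: the token counts, prices, and decode speed are hypothetical, and it assumes that hidden reasoning tokens are billed at the output-token rate and generated at the same speed as visible tokens. Check your provider's pricing and your own serving metrics before relying on any of these numbers.

```python
from dataclasses import dataclass


@dataclass
class RequestProfile:
    """Token counts for one request (all values are illustrative assumptions)."""
    input_tokens: int
    visible_output_tokens: int
    hidden_reasoning_tokens: int = 0


def request_cost(profile, input_price_per_m, output_price_per_m):
    """Dollar cost, assuming reasoning tokens are billed at the output rate."""
    billed_output = profile.visible_output_tokens + profile.hidden_reasoning_tokens
    return (profile.input_tokens * input_price_per_m
            + billed_output * output_price_per_m) / 1_000_000


def generation_seconds(profile, tokens_per_second):
    """Decode time, assuming hidden and visible tokens decode at the same speed."""
    total = profile.visible_output_tokens + profile.hidden_reasoning_tokens
    return total / tokens_per_second


def requests_per_hour_per_slot(profile, tokens_per_second):
    """How many such requests one serving slot can finish in an hour."""
    return 3600 / generation_seconds(profile, tokens_per_second)


# Hypothetical workloads: same prompt and visible answer, with and without reasoning.
simple = RequestProfile(input_tokens=500, visible_output_tokens=200)
complex_ = RequestProfile(input_tokens=500, visible_output_tokens=200,
                          hidden_reasoning_tokens=4000)

# Hypothetical prices (dollars per million tokens) and decode speed.
INPUT_PRICE, OUTPUT_PRICE, SPEED = 2.0, 8.0, 50.0

for name, p in [("simple", simple), ("complex", complex_)]:
    print(name,
          f"cost=${request_cost(p, INPUT_PRICE, OUTPUT_PRICE):.4f}",
          f"latency={generation_seconds(p, SPEED):.0f}s",
          f"throughput={requests_per_hour_per_slot(p, SPEED):.0f} req/h/slot")
```

Under these assumed numbers the user sees the same 200-token answer in both cases, yet the reasoning request costs roughly thirteen times more and holds its serving slot about twenty times longer, which is the concurrency loss the fourth point describes.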

Opening excerpt (first ~120 words)

Why reasoning models dramatically increase token usage, latency, and infrastructure costs in production systems

Mostafa Ibrahim, May 3, 2026

Introduction: the compute bill era

For years, making a model smarter meant increasing parameters during training. Today, flagship models like GPT 5.5 and the o1 series achieve high performance by spending more compute resources on every single response. This process is known as inference scaling or test-time compute. It allows a model to use extra processing power during generation to check its own logic and iterate until it finds the best answer.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at Towards Data Science.